Center for Excellence in Teaching and Learning

The Center for Excellence in Teaching and Learning promotes exemplary teaching and meaningful learning at the University of Mississippi. We serve the UM teaching community by supporting the awareness and adoption of inclusive, evidence-based teaching practices and acting as a hub for the study and dissemination of research on teaching.

Explore our programs

For Faculty

We offer a variety of programs to support UM faculty in any discipline in their growth as instructors.

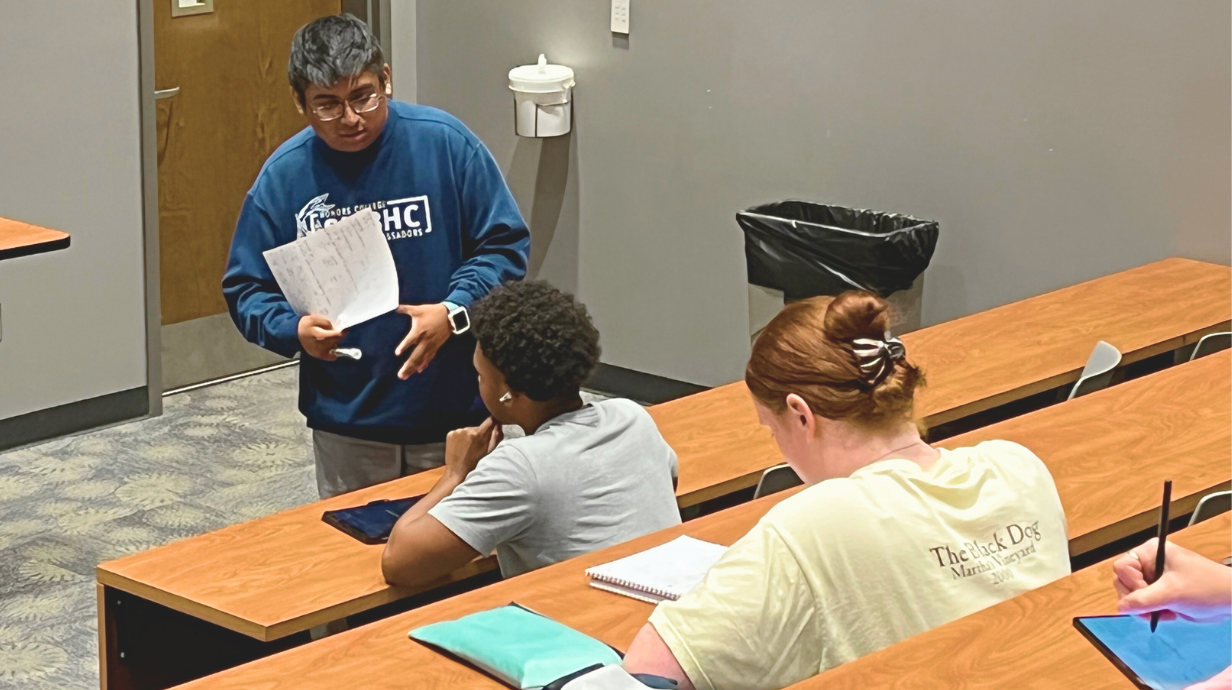

For Graduate Students

Whether you're an instructor, teaching assistant, or aspiring teacher, CETL is here to support your teaching development.

For Undergraduates

Supplemental Instruction (SI) aims to boost student success by providing weekly, structured review sessions for historically difficult classes.

Keep up with CETL

We send a monthly newsletter to the campus teaching community and share event recaps, resources, and news about CETL on our public blog.